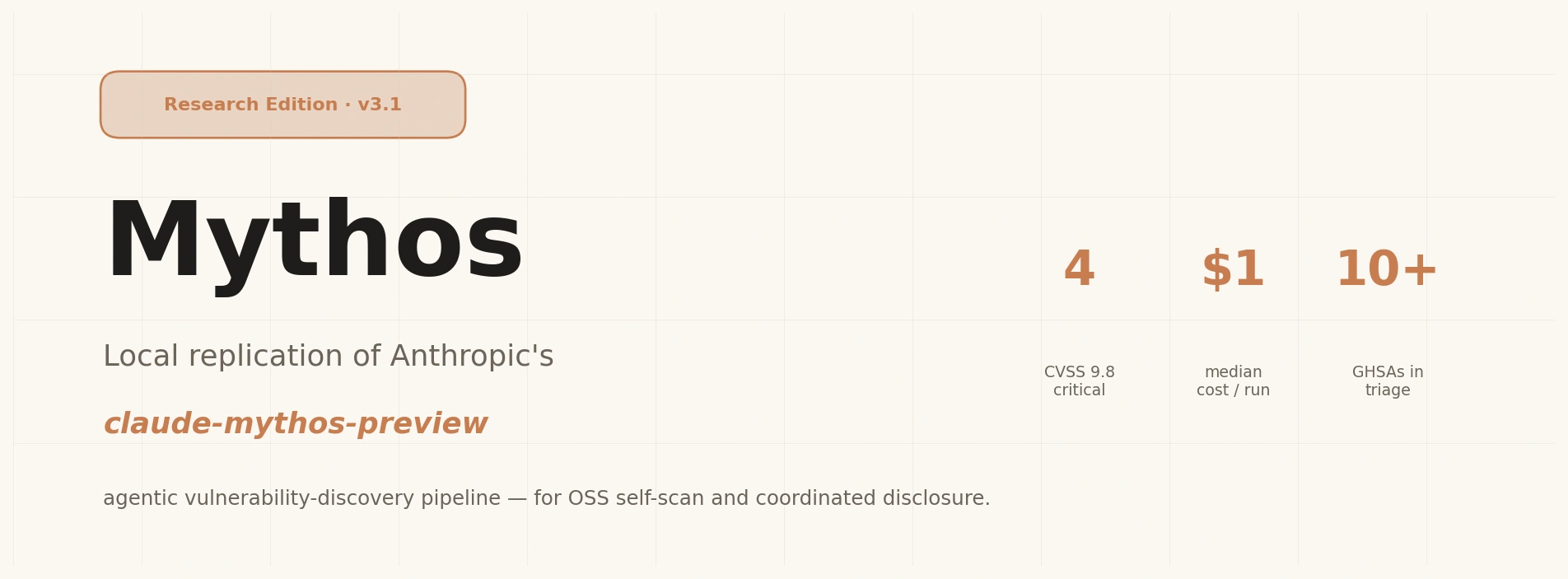

Claude Mythos refers to a frontier-class agentic security system introduced by Anthropic in early 2026, designed to autonomously discover and exploit software vulnerabilities at scale.

The system became widely discussed because of its reported ability to produce fully working remote code execution exploits from real-world codebases with minimal human guidance. In one described case, an engineer with no security background prompted the system overnight and woke up to a complete exploit chain.

Claude Mythos Preview is reported to achieve:

- 93.9% on SWE-bench Verified

- 97.6% on USAMO-level math benchmarks

- 83.1% on CyberGym security tasks

More importantly, it has been described as capable of discovering zero-day vulnerabilities across major operating systems and browsers, which led to Anthropic restricting public access and instead launching Project Glasswing for controlled deployment to selected infrastructure partners.

This makes Mythos less of a typical model release and more of a controlled security capability.

Why Mythos feels like a shift in security research

Mythos represents a structural shift in how vulnerability research is performed.

Traditional security workflows rely on:

- static analyzers

- fuzzing systems

- manual code inspection

- exploit chaining by human experts

Mythos folds much of this into an agentic loop that:

- prioritizes risky code regions

- reasons about data flow and input surfaces

- generates vulnerability hypotheses

- validates findings through tooling and secondary review

Instead of replacing security tools, it orchestrates them through an LLM-driven workflow.

What Mythos actually does under the hood

At a high level, Mythos operates through a structured multi-stage pipeline:

- A codebase is loaded into an isolated environment

- The system scans for high-risk file regions

- The model ranks files by vulnerability likelihood

- Focused analysis is performed on selected files

- A secondary agent validates findings

- Results are aggregated into structured reports

File risk is typically categorized into five tiers, listed here from lowest to highest:

- constants, no meaningful risk

- internal utilities

- business logic

- input handling, databases, authentication

- network-facing or cryptographic components

The key design principle is prioritization: not all code is equally important.
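The tiering above can be sketched in a few lines of Python. This is only an illustration: the numeric values 1 to 5, the `RiskTier` name, and the `prioritize` helper are assumptions, and the sketch assumes the list runs from lowest to highest risk.

```python
from enum import IntEnum
from typing import Dict, List


class RiskTier(IntEnum):
    """Five tiers mirroring the list above (numbering is an assumption)."""
    CONSTANTS = 1          # constants, no meaningful risk
    INTERNAL_UTILITY = 2   # internal utilities
    BUSINESS_LOGIC = 3     # business logic
    INPUT_DATA_AUTH = 4    # input handling, databases, authentication
    NETWORK_CRYPTO = 5     # network-facing or cryptographic components


def prioritize(files: Dict[str, RiskTier],
               min_tier: RiskTier = RiskTier.BUSINESS_LOGIC) -> List[str]:
    """Return paths at or above min_tier, riskiest first (ties broken by path)."""
    kept = [(tier, path) for path, tier in files.items() if tier >= min_tier]
    return [path for tier, path in sorted(kept, key=lambda tp: (-tp[0], tp[1]))]


# Hypothetical file classification, for illustration only.
example = {
    "src/constants.py": RiskTier.CONSTANTS,
    "src/auth/login.py": RiskTier.INPUT_DATA_AUTH,
    "src/net/server.py": RiskTier.NETWORK_CRYPTO,
    "src/billing/invoice.py": RiskTier.BUSINESS_LOGIC,
}
print(prioritize(example))
```

Files below the threshold are simply never handed to the expensive reasoning step, which is the whole point of prioritization.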

How to recreate the Mythos pipeline

The open-source research scaffold at https://github.com/Keyvanhardani/Mythos-research implements a local, reproducible version of this workflow using general-purpose models like Claude Opus through the Claude Code CLI.

Created by Keyvan Hardani, an applied AI researcher and engineer, the scaffold focuses on structured vulnerability discovery rather than exploitation.

The pipeline is divided into seven parameterised phases. Phases 0–4 and 6 are open in this edition. Phase 5 (live execution validation) is intentionally excluded for safety and research scope reasons.

Phase 0: Language detection

The system identifies the dominant programming language in the target repository. This determines which vulnerability semantics prompt is used, such as language-specific rules for unsafe memory handling, injection patterns, or deserialization risks.
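One simple way to approximate this step is to count source files by extension. The sketch below is not the scaffold's detector: the `EXTENSION_TO_LANGUAGE` map and the `detect_language` function are hypothetical, and a real implementation would likely weigh file sizes or build manifests as well.

```python
from collections import Counter
from pathlib import Path
from typing import Optional

# Hypothetical extension map; a real detector would cover far more languages.
EXTENSION_TO_LANGUAGE = {
    ".cs": "c#", ".py": "python", ".js": "javascript", ".ts": "typescript",
    ".java": "java", ".go": "go", ".rs": "rust", ".c": "c", ".cpp": "c++",
    ".php": "php",
}


def detect_language(root: str) -> Optional[str]:
    """Return the language with the most source files under root, or None."""
    counts: Counter = Counter()
    for path in Path(root).rglob("*"):
        if path.is_file():
            language = EXTENSION_TO_LANGUAGE.get(path.suffix.lower())
            if language:
                counts[language] += 1
    return counts.most_common(1)[0][0] if counts else None
```

The returned label would then be used to pick the matching vulnerability semantics prompt.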

Phase 1: Sink-guided slicing

A curated sink catalog (e.g. scripts/lib/sinks/*.txt) is matched against the codebase using fast search tooling. This produces NDJSON records, one per hit, with fields such as:

- category

- pattern

- file

- line

- code snippet

This step dramatically reduces search space before any reasoning begins.
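To make the idea concrete, here is a minimal sketch of sink slicing in Python. It does not reproduce the scaffold's catalog format or search tooling: the in-memory `SINK_CATALOG`, its regex patterns, and the `slice_sinks` and `write_ndjson` helpers are all assumptions chosen for illustration.

```python
import json
import re
from pathlib import Path
from typing import Dict, Iterable, Iterator, List

# Hypothetical catalog: category -> regex patterns (illustrative only).
SINK_CATALOG: Dict[str, List[str]] = {
    "DESERIALIZATION": [r"BinaryFormatter", r"pickle\.loads?\("],
    "CODE_EVAL": [r"\beval\(", r"\bexec\("],
    "SQL_INJECTION": [r"\.execute\(.*\+"],
}


def slice_sinks(root: str, suffixes=(".py", ".cs", ".js")) -> Iterator[dict]:
    """Yield one record per sink hit: category, pattern, file, line, snippet."""
    compiled = [(cat, pat, re.compile(pat))
                for cat, pats in SINK_CATALOG.items() for pat in pats]
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        for line_no, line in enumerate(text.splitlines(), start=1):
            for category, pattern, regex in compiled:
                if regex.search(line):
                    yield {
                        "category": category,
                        "pattern": pattern,
                        "file": path.relative_to(root).as_posix(),
                        "line": line_no,
                        "snippet": line.strip()[:200],
                    }


def write_ndjson(hits: Iterable[dict], out_path: str) -> int:
    """Write one JSON object per line and return the number of records."""
    count = 0
    with open(out_path, "w", encoding="utf-8") as fh:
        for hit in hits:
            fh.write(json.dumps(hit) + "\n")
            count += 1
    return count
```

Because this pass is plain pattern matching, it is cheap enough to run over the whole repository before any model call is made.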

Phase 2: File ranking

Files are scored based on sink density and risk category distribution. High-signal categories dominate ranking:

- deserialization issues

- code evaluation (eval-like sinks)

- SQL injection surfaces

- prototype pollution

- XXE vulnerabilities

- unsafe framework patterns

- input sanitisation gaps

- browser API misuse

Files containing only safe variants (e.g. SAFE_* patterns) are deprioritised.
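A ranking step along these lines could look like the sketch below. The article names the high-signal categories but not their weights, so the numbers in `CATEGORY_WEIGHTS`, the category identifiers, and the `rank_files` function are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# Hypothetical weights; only the category list comes from the article.
CATEGORY_WEIGHTS: Dict[str, float] = {
    "DESERIALIZATION": 5.0, "CODE_EVAL": 5.0, "SQL_INJECTION": 4.0,
    "PROTOTYPE_POLLUTION": 4.0, "XXE": 4.0, "UNSAFE_FRAMEWORK": 3.0,
    "INPUT_SANITISATION": 2.0, "BROWSER_API": 2.0,
}
DEFAULT_WEIGHT = 1.0


def rank_files(hits: Iterable[dict], max_files: int = 8) -> List[Tuple[str, float]]:
    """Score each file by summed category weight; SAFE_* hits add nothing."""
    scores: Dict[str, float] = defaultdict(float)
    for hit in hits:
        category = hit["category"]
        if category.startswith("SAFE_"):
            scores[hit["file"]] += 0.0  # file stays listed but gains no score
        else:
            scores[hit["file"]] += CATEGORY_WEIGHTS.get(category, DEFAULT_WEIGHT)
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:max_files]
```

Files whose only hits are safe variants end up at the bottom with a score of zero, so they are deprioritised rather than silently dropped.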

Phase 3: Agentic hunt

A separate Claude Code subagent is launched per high-ranked file. Each agent receives:

- sink context for that file

- vulnerability semantics prompt (VSP)

- optional diversity hint for exploration variation

These agents independently search for vulnerabilities in parallel.
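The fan-out can be sketched with a thread pool. In this sketch `run_agent` is a hypothetical callable standing in for whatever launches a Claude Code subagent; no CLI flags or API calls are assumed, and the prompt layout in `build_hunter_prompt` is invented for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Optional, Sequence


def build_hunter_prompt(path: str, sink_hits: List[dict], vsp: str,
                        diversity_hint: Optional[str] = None) -> str:
    """Combine the VSP, the file's sink context, and an optional hint."""
    lines = [vsp.strip(), "", f"Target file: {path}", "Sink hits:"]
    lines += [f"- line {h['line']} [{h['category']}] {h['snippet']}"
              for h in sink_hits]
    if diversity_hint:
        lines += ["", f"Exploration hint: {diversity_hint}"]
    return "\n".join(lines)


def run_hunters(jobs: Dict[str, List[dict]], vsp: str,
                run_agent: Callable[[str], str],
                hints: Sequence[Optional[str]] = (None,),
                max_workers: int = 8) -> Dict[str, List[str]]:
    """Run one agent call per (file, hint) pair in parallel, keyed by file."""
    results: Dict[str, List[str]] = {path: [] for path in jobs}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [
            (path, pool.submit(run_agent,
                               build_hunter_prompt(path, hits, vsp, hint)))
            for path, hits in jobs.items() for hint in hints
        ]
        for path, future in futures:
            results[path].append(future.result())
    return results
```

Passing several entries in `hints` gives each file multiple independent hunters, which is one plausible way to get exploration variation.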

Phase 4: Skeptical validation

Each candidate finding is re-evaluated by a second-pass agent acting as a skeptical reviewer. It reassesses:

- correctness of the vulnerability

- exploitability

- false positive likelihood

Output labels include:

- CONFIRMED

- FALSE_POSITIVE

- DOWNGRADED

- NEEDS_MORE_INFO
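The four labels map naturally onto an enum. The sketch below assumes the reviewer replies with a JSON object carrying a `label` field; that field name, the `parse_review` helper, and the choice of which verdicts count as actionable are assumptions, not the scaffold's documented behaviour.

```python
import json
from enum import Enum


class Verdict(Enum):
    CONFIRMED = "CONFIRMED"
    FALSE_POSITIVE = "FALSE_POSITIVE"
    DOWNGRADED = "DOWNGRADED"
    NEEDS_MORE_INFO = "NEEDS_MORE_INFO"


def parse_review(raw: str) -> Verdict:
    """Parse a reviewer reply; anything unparseable becomes NEEDS_MORE_INFO."""
    try:
        label = json.loads(raw)["label"]  # "label" is a hypothetical field name
        return Verdict(str(label).strip().upper())
    except (ValueError, KeyError, TypeError):
        return Verdict.NEEDS_MORE_INFO


def is_actionable(verdict: Verdict) -> bool:
    """Assumed policy: only confirmed or downgraded findings reach the report."""
    return verdict in (Verdict.CONFIRMED, Verdict.DOWNGRADED)
```

Falling back to NEEDS_MORE_INFO on malformed output keeps a confused reviewer from silently confirming or discarding a finding.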

Phase 5: Live execution (excluded in this repo)

This stage performs runtime validation of exploits in a controlled execution environment. It is intentionally omitted from the public repository to avoid turning the scaffold into an automated exploitation system.

Phase 6: Aggregation

All results are compiled into structured JSON reports containing:

- severity breakdown

- per-phase telemetry (cost, runtime, hits)

- validation outcomes per finding

- deduplicated vulnerability summaries
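A minimal aggregation pass might look like the following. The key names in the returned dictionary and the deduplication key (file, location, lowercased title) are assumptions; the actual layout of the scaffold's reports is not described here.

```python
import json
from collections import Counter
from typing import Dict, List


def aggregate(findings: List[dict], telemetry: Dict[str, dict]) -> dict:
    """Deduplicate findings and summarise severities and validation outcomes."""
    unique: Dict[tuple, dict] = {}
    for finding in findings:
        key = (finding["file"], finding["location"], finding["title"].lower())
        unique.setdefault(key, finding)  # first occurrence wins
    deduped = list(unique.values())
    return {
        "severity_breakdown": dict(Counter(f["severity"] for f in deduped)),
        "validation_outcomes": dict(Counter(f["verdict"] for f in deduped)),
        "telemetry": telemetry,  # e.g. per-phase cost, runtime, hits
        "findings": deduped,
    }


def write_summary(summary: dict, out_path: str) -> None:
    """Serialise the summary as indented JSON."""
    with open(out_path, "w", encoding="utf-8") as fh:
        json.dump(summary, fh, indent=2)
```

Deduplicating before counting matters when several hunters examine the same file, since they may report the same issue more than once.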

Running your own Mythos locally with Claude Opus

Once the dependencies and the Claude Code CLI are installed:

```bash
# 1) clone
git clone https://github.com/Keyvanhardani/mythos-research.git
cd mythos-research

# 2) make sure Claude Code CLI is available
claude --version

# 3) run against a target directory (read-only)
bash scripts/mythos-v3.sh /path/to/target --max-files 8 --budget 3.00

# optional: diverse sampling (K independent hunters per file)
bash scripts/mythos-v3.sh /path/to/target --pass-at-k 3

# optional: skip everything that would need exec-validator.sh
bash scripts/mythos-v3.sh /path/to/target --skip-exec
```

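Optional flags:

- --max-files → limits how many top-ranked files get a hunter

- --budget → caps spend in USD (the run banner reports this as a per-hunter limit)

- --pass-at-k 3 → multiple independent analysis runs per file

- --skip-exec → disables execution-related validation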

Reports are stored in:

reports/<scan-id>/summary.json

Once started, the output looks similar to this run against my small astronaut simulation game:

```
mythos-research % bash scripts/mythos-v3.sh ../astra-nova
==========================================================
mythos-v3 | scan mythos3_20260424_083334_3957
target : /Volumes/X/Projects/astra-nova
model : claude-opus-4-7
budget : $3.00 per hunter, max 8 hunters
report : /Volumes/X/Projects/mythos-research/reports/mythos3_20260424_083334_3957
==========================================================
[08:33:34] Phase 0 — language detection
[08:33:37] detected: c#
[08:33:37] Phase 1 — sink slicing
sink-slicer: 76 hits → /Volumes/X/Projects/mythos-research/reports/mythos3_20260424_083334_3957/slices/
[08:33:49] 76 sink hits
[08:33:49] Phase 2 — file ranking
[08:33:49] selected 8 files
[08:33:49] Phase 3 — agentic hunt (parallel)
[08:33:49] launch 1/8 k=1/1 : app/Services/AstronautTrainingService.cs
[08:33:49] launch 2/8 k=1/1 : app/Planning/MissionScheduler.cs
[08:33:49] launch 3/8 k=1/1 : app/Telemetry/TelemetryIngestionPipeline.cs
[08:33:49] launch 4/8 k=1/1 : app/AI/CrewEvaluationEngine.cs
[08:33:49] launch 5/8 k=1/1 : app/Integrations/ResearchDataConnector.cs
[08:33:49] launch 6/8 k=1/1 : app/Simulations/TrainingSimulationEngine.cs
[08:33:49] launch 7/8 k=1/1 : app/Core/WorkflowOrchestrator.cs
[08:33:49] launch 8/8 k=1/1 : app/Core/AstraNovaWorkflowEngine.cs
✓ app/Services/AstronautTrainingService.cs
✓ app/Planning/MissionScheduler.cs
✓ app/Telemetry/TelemetryIngestionPipeline.cs
✓ app/AI/CrewEvaluationEngine.cs
✓ app/Integrations/ResearchDataConnector.cs
✓ app/Simulations/TrainingSimulationEngine.cs
✓ app/Core/WorkflowOrchestrator.cs
✓ app/Core/AstraNovaWorkflowEngine.cs
[08:35:21] progress 1/8 hunters complete
[08:35:21] progress 2/8 hunters complete
[08:35:21] progress 3/8 hunters complete
[08:35:21] progress 4/8 hunters complete
[08:35:21] progress 5/8 hunters complete
[08:35:21] progress 6/8 hunters complete
[08:35:21] progress 7/8 hunters complete
[08:35:21] progress 8/8 hunters complete
[08:35:21] Phase 4 — validation
[08:35:21] Phase 5 — live-exec validation (min-severity=HIGH)
[08:35:21] WARN: exec-validator.sh missing or not executable; skipping phase 5
[08:35:21] Phase 6 — aggregate
==========================================================
SCAN COMPLETE
summary : /Volumes/X/Projects/mythos-research/reports/mythos3_20260424_083334_3957/summary.json
logs : /Volumes/X/Projects/mythos-research/logs/mythos3_20260424_083334_3957/
==========================================================
```

Inside the created reports and logs directories you will find the findings. For example, the output for app/Services/AstronautTrainingService.cs looks like:

```json
{
  "findings": [
    {
      "severity": "LOW",
      "title": "Non-critical logging verbosity in training initialization",
      "location": "L118 initializeTrainingSession()",
      "description": "Training session initialization logs full simulation metadata (astronaut role, scenario ID, and environment preset) at INFO level. While no sensitive data or secrets are present, the verbosity may slightly increase log noise in high-throughput simulation runs."
    }
  ],
  "verdict": "PASS_WITH_MINOR_ISSUE",
  "notes": "All execution paths in AstronautTrainingService.cs operate on internally generated simulation data with no user-controlled or external inputs. Scenario configuration and telemetry streams are strictly sandboxed and deterministic. No injection points, unsafe deserialization, or privilege boundary crossings were identified. The only issue is a low-severity logging verbosity concern that does not impact security posture."
}
```
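Per-file output in this shape is easy to triage with a short script. The sketch below relies only on the `findings` and `severity` fields shown above; the `SEVERITY_ORDER` scale is an assumption (only LOW and HIGH appear in this article), and `findings_at_or_above` is a hypothetical helper.

```python
import json
from typing import List

# Assumed severity scale; only LOW and HIGH are attested in the sample output.
SEVERITY_ORDER = ["LOW", "MEDIUM", "HIGH", "CRITICAL"]


def findings_at_or_above(report_json: str, min_severity: str = "HIGH") -> List[dict]:
    """Return findings whose severity is at or above min_severity."""
    report = json.loads(report_json)
    threshold = SEVERITY_ORDER.index(min_severity)
    return [
        finding for finding in report.get("findings", [])
        if finding.get("severity") in SEVERITY_ORDER
        and SEVERITY_ORDER.index(finding["severity"]) >= threshold
    ]


sample = '{"findings": [{"severity": "LOW", "title": "Verbose logging"}], "verdict": "PASS_WITH_MINOR_ISSUE"}'
print(len(findings_at_or_above(sample, "HIGH")))  # the LOW finding is filtered out
print(len(findings_at_or_above(sample, "LOW")))   # the LOW finding is kept
```

For the sample file above, a HIGH threshold returns nothing, which matches the reviewer's verdict that the only issue is a minor one.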

What you can realistically expect (and what you cannot)

| Capability | Performance in Mythos-style systems |
|---|---|
| Crash-level bugs | strong |
| Input validation issues | strong |
| Logic vulnerabilities | moderate to strong |
| Full exploit chains | limited |
| Multi-step memory corruption exploitation | weak |

The system is strongest at discovery and classification, not full exploit engineering.

Why this matters for game developers

For game developers, this approach is especially relevant in:

- multiplayer networking code

- modding or scripting interfaces

- serialization layers (save systems, replay systems)

- backend APIs and authentication logic

It helps surface:

- client trust violations

- unsafe deserialization in save files

- scripting engine escape vectors

- network desync exploit paths

It is particularly useful as a pre-release security layer that sits before manual penetration testing.

Claude Mythos demonstrates a broader shift in software security: the value is no longer in isolated tools or prompts, but in structured reasoning pipelines.

The Mythos Research repository shows that even without proprietary internal models, a large part of this capability can be reproduced through:

- decomposition of tasks

- sink-driven prioritization

- multi-agent orchestration

- skeptical validation loops

In practice, it turns a general-purpose language model into a coordinated security research system, one that developers can now experiment with directly.