What Are AI-Hallucinated Dependencies?

AI hallucination occurs when a large language model, or a tool built on one such as GitHub Copilot or ChatGPT, produces confident but factually incorrect or non-existent information. In coding contexts, this can manifest as:

- Suggesting package names that do not exist

- Calling functions or methods that aren't part of any official API

- Recommending outdated, deprecated, or vulnerable dependencies

- Linking to GitHub repositories or modules that have never existed

For example, an AI assistant might recommend installing text-analyzer-helper from npm, even though no such package exists.

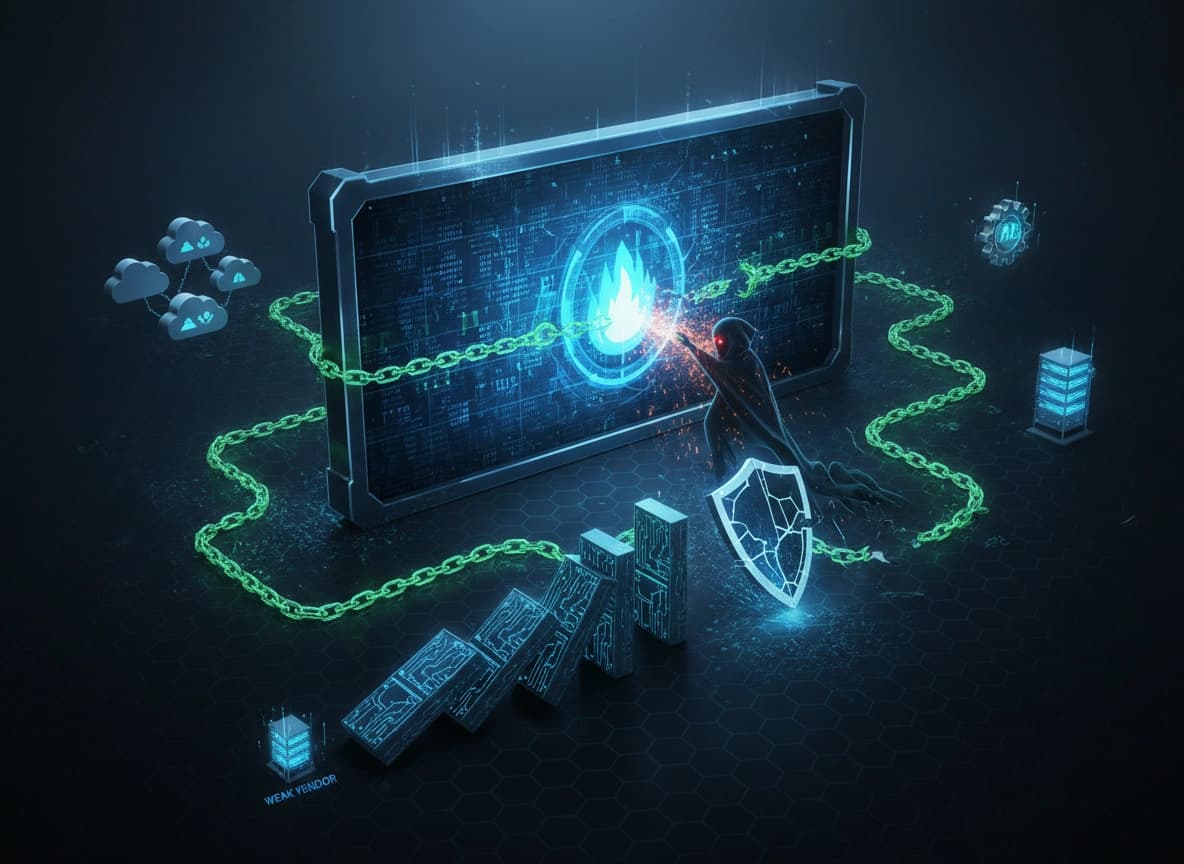
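A hallucinated name has no entry in the package registry. One basic safeguard is therefore to confirm that a suggested package is actually published before installing it. The Python sketch below assumes that the public npm registry at `https://registry.npmjs.org/` answers HTTP 404 for names that were never published. `npm_package_exists` and `find_unpublished` are hypothetical helpers written for this illustration; they are not part of npm or any existing tool.

```python
import urllib.error
import urllib.parse
import urllib.request
from typing import Callable, Iterable

# Assumption: the public npm registry serves package metadata at <REGISTRY><name>
# and returns HTTP 404 for names that have never been published.
REGISTRY = "https://registry.npmjs.org/"


def npm_package_exists(name: str, timeout: float = 10.0) -> bool:
    """Hypothetical helper: True if `name` is published on the npm registry."""
    # Scoped names such as "@scope/pkg" need the slash percent-encoded.
    url = REGISTRY + urllib.parse.quote(name, safe="@")
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError as err:
        if err.code == 404:
            return False
        # Rate limits or outages must not be mistaken for "package missing".
        raise


def find_unpublished(
    names: Iterable[str],
    exists: Callable[[str], bool] = npm_package_exists,
) -> list[str]:
    """Hypothetical helper: return the suggested names the lookup cannot find."""
    return [name for name in names if not exists(name)]
```

Calling `find_unpublished(["express", "text-analyzer-helper"])` would return whichever of those names the registry reports as missing. The lookup is passed in as a parameter, so it can be replaced with an offline allowlist or a test stub. Existence alone does not prove that a package is legitimate. A name that resolves today may have been registered recently by an unknown publisher, so its age, maintainers, and download history are also worth checking.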

How Hackers Can Exploit This

If an attacker discovers that ChatGPT has recommended a non-existent package, they can quickly publish a malicious package under that name. The next time a user asks a similar question, ChatGPT might suggest the attacker’s now-real but harmful package. This tactic exploits AI hallucinations to distribute malicious code without relying on traditional methods such as typosquatting or impersonating legitimate libraries. As a result, harmful code can end up in real applications or trusted repositories, posing a serious threat to the software supply chain.

A developer seeking help from a tool like ChatGPT could unknowingly install a malicious library simply because the AI assumed it existed and the attacker made that assumption true. Some attackers might even publish functional, Trojan-like libraries that work as advertised, which increases the chances they will be widely adopted before their true nature is uncovered.

Once installed, these malicious packages can carry out a range of harmful actions. They may:

- Steal environment variables or sensitive credentials

- Open backdoors to allow unauthorized access

- Silently log and exfiltrate data
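Npm lifecycle scripts are one way such code can run as soon as a package is installed. During `npm install`, npm executes the `preinstall`, `install`, and `postinstall` scripts declared in a package's `package.json`, unless the `--ignore-scripts` flag is used. Because this mechanism is not described above, treat the following Python sketch as an added illustration. `install_hooks` is a hypothetical helper that lists a manifest's install-time scripts so they can be read before anything is executed.

```python
import json

# Lifecycle scripts that npm runs automatically while installing a package
# (skipped when npm is invoked with --ignore-scripts).
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_hooks(manifest_text: str) -> dict[str, str]:
    """Return the install-time scripts declared in a package.json document.

    Hypothetical helper for illustration: it only reports the commands;
    it does not judge whether they are malicious.
    """
    manifest = json.loads(manifest_text)
    scripts = manifest.get("scripts") or {}
    return {hook: scripts[hook] for hook in INSTALL_HOOKS if hook in scripts}


# Usage with a made-up manifest for a hypothetical package.
example = json.dumps({
    "name": "example-pkg",
    "version": "1.0.0",
    "scripts": {"test": "jest", "postinstall": "node setup.js"},
})
print(install_hooks(example))  # {'postinstall': 'node setup.js'}
```

Listing the hooks does not show whether they are harmful. It only surfaces the commands that would run with the installing user's privileges, including access to that user's environment variables.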